Common Name: Monkshood, Helmet-flower, Wolfsbane, Devil’s Helmet, Aconite – Like many common names, Monkshood is descriptive, emphasizing a feature recognizable as a head covering like a hood or a helmet. Wolfsbane reflects the well-deserved reputation of the plant as a poison and therefore a bane to wolves. Aconite is known as the queen of poisons.

Scientific Name: Aconitum uncinatum – There is little doubt that the generic name Aconitum is taken directly from the Greek word akoniton, but it is not clear what it meant in ancient Greece. By direct translation, konis means “dust”, so a-konis is “without dust.” [1] It has been suggested that the places where the plant grows (like the rocky shores of the Greek archipelago) were literally without dust. The confusion is attributable to the cultural importance (and names) of the plant millennia before historical accounting. Uncinate means bent at the tip like a hook, a direct derivative in English from the Latin (later French) uncinatus.

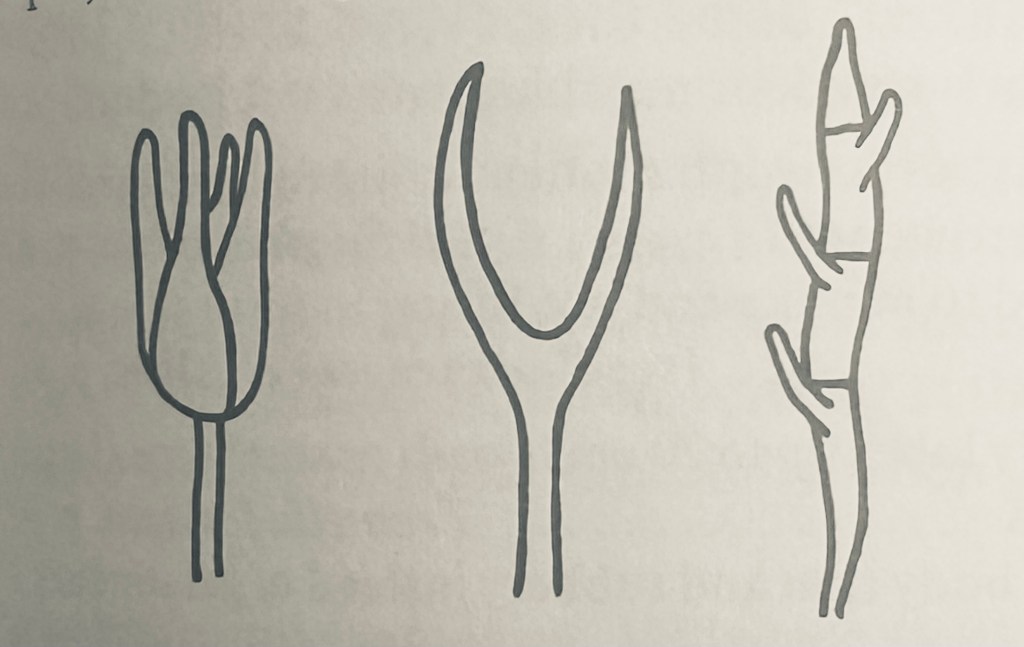

Potpourri: Monkshood has one of the best descriptive names among flowers. The arched petals that extend over the stamen and stigma complex of the flower’s reproductive organs are similar in form, if not function, to the cowl of the cassock worn by monks to emphasize religious piety and plainness; there is no need for a mnemonic. While the flower is not all that common in North America (except as a garden varietal), it is much more so in the Mediterranean basin, where it rose to prominence in the earliest accounts due to its use as both a medicinal in small doses and as a deadly poison above a low lethal threshold. Its unusual shape can only have evolved to attract a particular pollinator, as flowers can have no other function than to employ the mobility of animals to transfer the male pollen to the female ovary. The plant itself is spindly, depending on the support of other adjacent plants [2]. This too is a matter of successful evolution, midway between a free-standing shrub and a draped, climbing vine. A smaller, white variant called Trailing Wolfsbane (A. reclinatum) is also found in the southern Appalachians.

Monkshood is a member of the buttercup family (Ranunculaceae), which gives its name to the order Ranunculales; the root name means small frog in Latin due to the frog-like, or ranine, shape of the type species’ leaves. According to the latest DNA iteration of the three-hundred-year-old (and increasingly obsolete) taxonomy of Linnaeus, the plants of the buttercup order are among the earliest lineages of those with flowers. When, where, and how life emerged from the sea onto the forbidding shores of terra firma is a matter of ongoing conjecture. What is clear is that the encased seed angiosperms came later, eventually evolving the improbable shapes and colors of flowers. This was evident to Darwin, who wrote that “flowers rank amongst the most beautiful productions of nature” and could not have evolved “if insects had not been developed on the face of the earth.” [3] One may conclude that monkshoods evolved vibrant colors and an overhanging petal because that configuration (and possibly scent) drew a specific pollinator that fertilized the plants to which it was attracted and perpetuated the “intelligent design.”

The angiosperms split into two primary clades as they spread across Pangaea in the Cretaceous Period about 130 million years ago. The monocots (single seed leaf or cotyledon), which have parallel veins like grasses, orchids, and lilies, comprise about one quarter of the angiosperms, while the eudicots (formerly dicots), with net-veined leaves and two cotyledons, comprise most of the remainder. [4] The buttercup family was one of the earliest branches of the eudicot clade as diversification increased with dispersal to new habitats. Based on DNA analysis, the order Ranunculales is “sister to all remaining eudicots.” [5] This phylogenetic analysis is supported by the recent discovery of an intact fossil of a mature eudicot plant that included five stems and one intact flower that appears to have been a type of buttercup. Based on half-life measurements of the radioactive decay of argon and uranium in the surrounding soil, the flower bloomed about 127 million years ago. [6] The buttercups are then among the oldest of all the flowering plants. Their survival through the ages implies an early evolutionary trait that promoted their propagation – like toxins.

Many of the plants of the buttercup family are noted for their poisonous metabolites, even the common buttercup (Ranunculus acris). A popular field guide states “all native species of buttercup contain toxic or otherwise irritating oils and should not be eaten.” [7] However, since plants produce toxins almost certainly to protect against microbial and insect predation, the repurposing of plant toxins as herbal remedies has been common practice since antiquity. An equally popular field guide for medicinal herbs promotes buttercup leaves as “external rubefacient in rheumatism, arthritis, and neuralgia” while in the same breath warning “extremely acrid, causing intense pain and burning in mouth … blisters skin.” Native Americans used a poultice made from buttercup roots to treat abscesses and boils. [8] Monkshood produces the toxin aconite, named for the genus, which has been used as both a deadly poison for killing and a valued pharmaceutical for healing.

Pliny the Elder, the storied Roman military commander who died while attempting to rescue victims of the eruption of Mount Vesuvius in 79 AD, provides one of the earliest accounts of aconite in his seminal Natural History. Citing its Homeric mythological origins from the foaming mouth of the three-headed dog Cerberus that guarded the entrance to Hades, he relates that in “countries frequented by the panther, they rub meat with aconite, and if one of those animals should but taste it, its effects are fatal: indeed, were not these means adopted, the country would soon be over-run by them.” But he considered it equally well established that aconite was useful as a treatment, pointing out that aconite in mulled wine neutralized the venom of a scorpion sting. Citing in general that “such is the nature of this deadly plant, that it kills man, unless it can find in man something else to kill,” he promotes it as a “remarkably useful ingredient in compositions for the eyes.” In anticipation of the Doctrine of Signatures, Pliny praises the “deity who has made to us these numerous revelations for our practical benefit.” [9]

The fall of the Roman Empire to Germanic invaders in the fifth century ushered in the so-called Dark Ages; the repository of knowledge from the Greeks and later the Arabs was largely forgotten until the Renaissance rebirth a thousand years later. The aconite of Monkshood, however, was well known to even the pagan peoples and used mostly as a poison but also as a beneficial herb throughout antiquity. Practices varied from place to place, but it was reportedly used to fatten geese in eastern Europe, as a salad ingredient in Sweden, as a treatment for fever and joint pain in Germany, and as a remedy for the rheumatic gout of King Charles IV of Spain. Poisoning was more common, with accounts ranging from killing unwanted people, like “the old men of Ceos, when no longer useful to the state,” to poisoning the water supply of enemy states. [10] By the beginning of the modern era in the sixteenth century, the medicinal benefits of Monkshood had been supplanted by the evil of its poison. John Gerard provides an account of “certain ignorant persons” serving up a salad of Wolfsbane in Antwerp resulting in the “cruel” deaths of all patrons with swollen tongues, bulging eyes, and “their wits taken from them.” In conclusion, he notes that “there hath been little heretofore set down about the virtues of aconites.” [11]

Aconite is one of many plant alkaloids, nitrogen-containing organic compounds produced through evolutionary mutation, usually but possibly not always to enhance survival. The first plant alkaloid was discovered in 1804 by the German chemist Friedrich Sertürner, who extracted a substance from opium poppies which he named morphium, for the Greek god of dreams Morpheus. The term alkaloid was coined in 1819 due to the observation that dissolving one of the chemicals in water yielded an alkaline solution (i.e. pH > 7.0). Over the course of the next half century, strychnine, caffeine, quinine, nicotine, atropine, aconite, and cocaine were identified by chemists, all potent and some poisonous. Aconite became known as the “queen of poisons” because it is fast acting, causing death in less than an hour, and because it was essentially impossible to detect before the development of sophisticated laboratory analysis techniques over the last century. Its reputation as one of the preeminent poisons of antiquity is warranted.

Aconite kills by disrupting the sodium channels that open and close to allow for ionic transport to reset the electrical potential of nerve and heart cells. In one of biochemistry’s more unusual mechanisms, nerve and heart cells must reset after every signal and beat by the exchange of positively charged sodium and potassium ions across the cell membrane. The resetting of the electrical charge, called repolarization, is truly the spark of life. The symptoms of aconite poisoning start with vomiting and diarrhea as the stomach and then the small intestine react to the poison. Once the poison is in the bloodstream, all sensation gradually ceases, manifested by numbness and ultimately by the inability to breathe, exacerbated by heart palpitations. The only question is whether death will ensue by asphyxiation due to paralysis of the diaphragm or by cardiac arrest. The effect of aconite on nerves explains why it is also useful as an analgesic. Pain is a product of sensory nerves, so applying aconite topically in low doses alleviates pain. The problem is that the dose level for pain mitigation is not too far removed from the lethal dose.

One of the more interesting criminal cases involving the use of aconite as poison involved a murderer seeking to eliminate the other heirs to an inheritance. George Lamson was betrothed to an orphaned daughter of a wealthy British merchant, which entitled him to her portion of the money, shared with three other siblings. When one of the siblings died, Lamson, in debt due to morphine addiction, realized he could become solvent again by doing away with another. Lamson, who was a physician, purchased a small amount of aconite from a pharmacist, presumably for use as pain medication. He arranged a visit with his brother-in-law Percy at his boarding school, bringing a Scottish Dundee cake laced with aconite to afternoon tea. Percy lost consciousness and died that evening. Suspicion fell on Lamson, who was ultimately convicted on two pieces of evidence: a mouse injected with a sample of Percy’s urine died within half an hour, and the pharmacist testified that he had sold the aconite to Lamson. He was hanged on 28 April 1882 at London’s Wandsworth Prison. [12] According to the “eye for an eye” biblical prescription, a dose of aconite would have been a more just retribution for his crime.

References:

1. Webster’s Third New International Dictionary of the English Language, Unabridged, G. M. Merriam and Company, Chicago, 1971, p. 18

2. Niering, W. and Olmstead, N. National Audubon Society Field Guide to North American Wildflowers Alfred A. Knopf 1998 pp 374-375.

3. Darwin, C. On the Origin of Species, Easton Press Norwalk, Connecticut, 1976, p 165.

4. Wilson, C. and Loomis, W. Botany, Fourth Edition, Holt, Rinehart and Winston, New York, 1957, pp. 274, 373, 571-577.

5. Moore, M. et al. “Phylogenetic analysis of 83 plastid genes further resolves the early diversification of eudicots” Proceedings of the National Academy of Sciences, Volume 107 Number 10, 22 February 2010, pp. 4623-4628. https://pmc.ncbi.nlm.nih.gov/articles/PMC2842043/

6. Carpenter, J. “Science Shot, The Oldest Buttercup Yet” Science, 30 March 2011

7. Elias, T. and Dykeman, P. Edible Wild Plants, Sterling Publishing Company, New York, p. 262.

8. Duke, J. and Foster, S. Medicinal Plants and Herbs, Houghton Mifflin Co., New York, p. 123.

9. Pliny. “Chapter 2. Aconite, Otherwise Called Thelyphonon, Cammaron, Pardalianches, or Scorpio; Four Remedies.” The Natural History of Pliny, Book XXVII. Translated by Bostock, John; Riley, Henry T. p. 218.

10. Jackson, R. “Notes on the History, Properties, and Uses of Aconitum napellus” The Lancet. 3 May 1856 Volume 67 Number 1705

11. Gerard, John. The Herball or Generall Historie of Plantes (1st ed.) 1597, London: John Norton, pp. 111-114.

12. Bradbury, N. A Taste for Poison, Saint Martin’s Press, New York, 2021, pp 92-116.