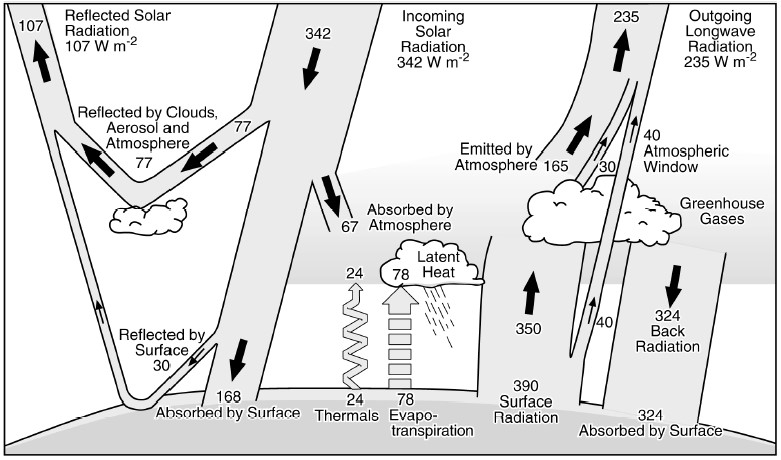

It is tempting to think of solar energy as a panacea for the climate change problem, a source of limitless carbon free electricity. The sun has been radiating the power of fusion for 4.6 billion years. While only a small percentage is directed toward the Earth, it is enough to have sparked the evolution of life that it has since sustained. It is the source of the energy of coal, natural gas and oil and the font of photosynthesis on which plants, fungi, and animals all ultimately depend. The Panglossian fix to the global warming problem is to stop using the sunlight energy stored as fossil fuel and start collecting sunlight energy directly. Back of the envelope calculations suggest that the sun’s nominal one kilowatt per square meter, delivered globally, is more than adequate. Take any large tract of sun-drenched desert, fill it with solar panels, and voilà, case closed. The Sahara is usually the desert of choice due to its size and sunlight extremes. The solar power falling within its torrid borders is three million billion watts, two hundred times the current global energy demand. Similarly, the western deserts of North America could be empaneled to produce fifty times the energy needs of the United States. [1]

Why this is not really the case is a matter of chemistry, physics, engineering, and economics. Solar panels are called photovoltaic (PV for short) because they collect sunlight energy (photo) and convert it to electrical current generated by a voltage gradient (voltaic). The individual solar cell is the sine qua non of any solar energy system that supplies electricity to the grid. Solar cells have their origin in research into the properties of semiconductors that also led to the development of transistors in the 1950s. A semiconductor is any material that has a conductivity between that of metals such as copper and insulators such as glass. The former conduct electrons readily and the latter impede their movement. Resistance is the inverse of conductance; metals have low resistance and insulators have high resistance. Semiconductors are elements, notably silicon and germanium, whose number and arrangement of electrons favor the generation and transport of a relatively small electrical current that can be controlled with high precision.

The chemistry of semiconductors is established by electrons. A fundamental principle of science is that the components of any system will gravitate to a condition of greater stability, which is generally at the lowest energy level. This propensity is manifest in the chemical bond, as the electrons in the outermost or valence subshell of an atom seek to establish a stable state. The idea that stability at the ground, or lowest energy, state was the basis for all chemical bonding was suggested by the noble or inert gases (helium, neon, argon, krypton, xenon, and radon) that don’t combine with anything else. Argon, the first inert gas to be discovered, was identified in 1894 by Lord Rayleigh and Sir William Ramsay as a mysterious trace component of air, which is otherwise nitrogen and oxygen, and was named for the Greek word argos, which means “lazy.” In 1923, the American chemist Gilbert Lewis proffered the eponymous Lewis theory of chemical bonding that has four fundamental tenets: (1) elements enter into compounds so as to share or exchange electrons; (2) in some cases, the electrons are transferred from one atom to another (an ionic bond); (3) in some cases, the electrons are shared between the two atoms (a covalent bond); and (4) each of the constituent atoms ends up with an “inert gas” outermost, or valence, electron shell.

The periodic table is arranged according to the progressive filling of electron shells, with elements exhibiting similar characteristics in vertical columns called Groups numbered left to right from I to VIII (1 to 8). The inert gases are located on the far right. The elements that range across the middle are called metals and those that are near the inert gases on the right are called non-metals. In between metals and non-metals is a smaller group of elements called the metalloids that exhibit both metal and non-metal properties. [2] The semiconductors are metalloids in the same group as carbon (Group IV) with the same bonding characteristics. Carbon is perhaps the most versatile of all elements due to its need for four electrons to complete its outer shell to the inert and stable configuration. It must therefore combine with four other atoms by sharing electrons in covalent bonds. The entire field of organic chemistry concerns carbon compounds, the basis for life. If the four combining atoms are also carbon, the resultant combination is diamond, the hardest natural material known. The versatility of carbon bonding is shared by the semiconductors silicon and germanium that lie just below it in the periodic table; they also form four covalent bonds. Since the shells that contain the valence electrons in these elements are further away from the nucleus (higher energy states) than in carbon, the electrons can more readily be moved into a conducting state. The propensity of semiconductors to release an electron for use in an electrical circuit is enhanced by the addition of elements from either side (Group III or V) into the bonding arrangement, a process called doping. [3] Solar cells are made from doped semiconductors.

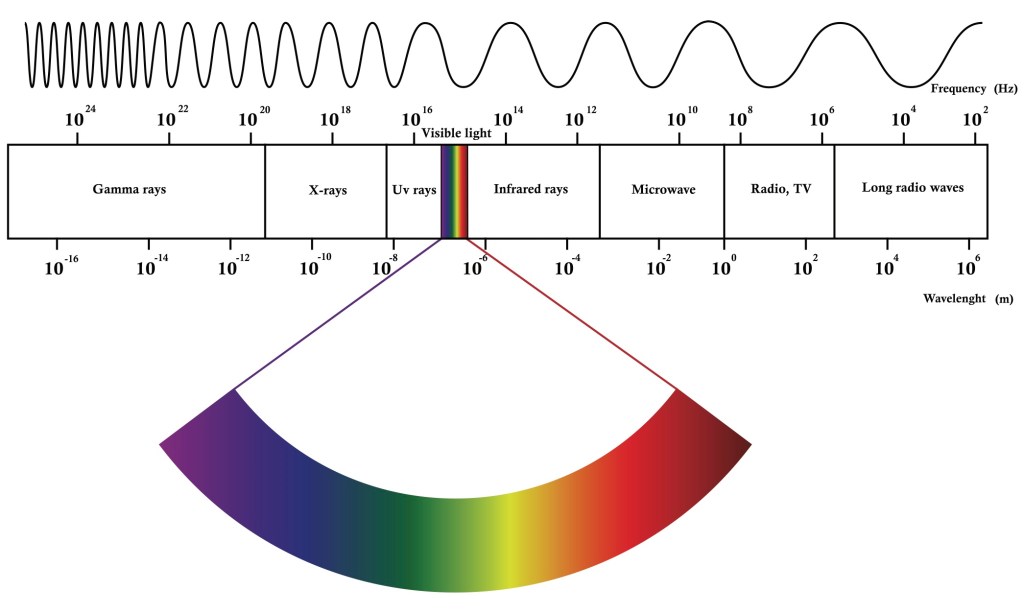

The physics of solar cell semiconductors is based on the observed phenomenon that radiant energy in the form of photons impinging on some surfaces will result in a flow of electrons. The German physicist Heinrich Hertz named it the photoelectric effect in 1887 after observing that ultraviolet light changed the voltage at which sparking occurred between a pair of metallic electrodes. By the early 1900s, it was determined through further experimentation that the number of electrons released was proportional to light intensity (measured in candlepower, now the candela) and that the energy of the electrons was dependent on the incident light frequency f (or wavelength λ, as they are related by the equation f = c/λ where c is the speed of light). That this could not be explained by classical physics was the impetus for Albert Einstein to propose what is now the fundamental theory of light. He posited that light could be considered as particles (now called photons) instead of waves and that these particles could penetrate an atom, collide with its electrons, and impart enough energy for them to escape from their orbit around the nucleus. The paper he wrote in 1905, entitled “On a Heuristic Viewpoint Concerning the Production and Transformation of Light,” was the basis for his Nobel Prize in physics, awarded in 1922. His work stimulated the then nascent field of quantum theory promoted by the Danish physicist Niels Bohr, who conceived the atomic model of electrons orbiting the nucleus in discrete energy levels called quanta. [4]

Physics also establishes the inherent limitations of solar panels because the photoelectric effect only occurs according to inviolate rules. Incoming solar energy must have sufficient intensity at the appropriate frequency to remove one of the outer shell or valence electrons of an atom to become part of the electrical current flow output of the solar panel. Electrons occur around the nucleus in discrete orbits that are separated into discrete quantum energy levels. The photoelectric effect in semiconductors can only be understood according to the rules of quantum mechanics. An incoming photon of light with sufficient frequency and intensity strikes an electron, knocking it from the valence energy band into the conduction energy band; literally a quantum leap. However, the electron must then make its way through the rest of the atoms in the panel to reach the surface, expending energy with every encounter. Einstein called this the work function with the symbol omega (ω). The work function varies with many factors, notably the surface condition of the material, its purity, and what is called the packing arrangement of its atoms in crystalline form. The optimization of the amount of electricity that can be extracted from sunlight must take these factors into account. [5]
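The frequency threshold described above can be illustrated with a short Python sketch. It combines the relation f = c/λ from the text with Planck's relation E = hf, which the text does not state, and compares the result with a band gap of about 1.12 electron volts for silicon, a commonly quoted room temperature value that is an assumption here rather than a figure from the sources cited. The function names are illustrative only.

```python
# Physical constants (SI units).
PLANCK_H = 6.62607015e-34       # joule seconds
LIGHT_C = 2.99792458e8          # meters per second
EV_IN_JOULES = 1.602176634e-19  # joules per electron volt

# Assumption, not from the text: silicon band gap near room temperature.
SILICON_BAND_GAP_EV = 1.12


def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy of one photon in eV, using f = c / wavelength and E = h * f."""
    wavelength_m = wavelength_nm * 1e-9
    frequency_hz = LIGHT_C / wavelength_m
    return PLANCK_H * frequency_hz / EV_IN_JOULES


def can_promote_electron(wavelength_nm: float,
                         band_gap_ev: float = SILICON_BAND_GAP_EV) -> bool:
    """True if a single photon carries at least the band gap energy."""
    return photon_energy_ev(wavelength_nm) >= band_gap_ev


if __name__ == "__main__":
    for name, nm in [("ultraviolet", 300.0), ("green", 550.0), ("infrared", 1500.0)]:
        energy = photon_energy_ev(nm)
        print(f"{name:12s} {nm:7.1f} nm  {energy:.2f} eV  "
              f"promotes electron: {can_promote_electron(nm)}")
```

Under these assumptions, visible and ultraviolet photons clear the silicon threshold while long wavelength infrared photons do not, no matter how many of them arrive. Any energy a photon carries beyond the threshold is not converted to current, which is one reason a single material cannot use the whole solar spectrum efficiently.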

The use of the chemistry of semiconductors and the physics of the photoelectric effect to produce electricity requires engineering, the practice of putting scientific knowledge to practical use. Engineering is the bridge from the laboratory solar or photovoltaic cell to a practical solar cell that can be used as part of a fielded electrical power supply system. The era of solid state electronics started in the 1950s at Bell Laboratories, the epitome of electrical research and development, rivaled only by Thomas Edison’s Menlo Park for its relevance to modernity. William Shockley was hired just after World War Two to lead the efforts to expand on prewar research that had led to the discovery of what were called P type for “positive” and N type for “negative” silicon semiconductive materials. The serendipity of chance discovery here played a key scientific role. Shipments of silicon that had been received at Bell Labs from various manufacturers were found to have different properties, leading to the hypothesis that the differences were caused by impurities. Further experimentation revealed that P type silicon was contaminated with boron from Group III, one column to the left of silicon in the periodic table, and that the N type silicon had phosphorus from Group V, one column to the right. On Friday the 13th of April 1945, Shockley drew a diagram in his lab notebook for a P-N junction which he called “a solid state valve drawing small control current” that could be used for “controlling the flow of electricity in a conducting path.” [6] The solid state transistor to control current in an electrical circuit that he imagined was the harbinger of the information age.

Solar cells followed using the same P-N junction principle. Here the object is not to amplify and otherwise control electrical current but to make electricity out of sunlight. The key to doing this was a matter of materials engineering using different combinations of semiconductor materials with different additives called dopants to improve efficiency, the amount of electrical energy out relative to the amount of sunlight energy in. For single silicon cells, the maximum theoretical efficiency of 33.7 percent imposed by physics is called the Shockley-Queisser Limit, with more than 50 percent of the sun’s energy lost as heat. The importance of doping is straightforward. Antimony from Group V with five valence electrons added to silicon with four valence electrons yields one extra electron that can then be readily removed as current, with both atoms having their “inert” configuration of covalent bonds. Bell Labs produced the first operating silicon solar cell in 1954 with an efficiency of 6 percent. [7]

Solar cells for spacecraft became the first practical application of photovoltaic technology. The International Geophysical Year of 1957 to 1958 was initiated in 1950 by scientists from across the globe to promote scientific cooperation. In 1955, the US and the USSR announced plans to launch earth satellites. The US program consisted of two publicly announced and openly pursued projects: Vanguard, a three-stage rocket designed by the Naval Research Laboratory, and Explorer, to be launched on a missile designed by the US Army Ballistic Missile Agency. The Soviets were mum until the surprise launch of Sputnik, the world’s first artificial satellite, on 4 October 1957, followed by Sputnik 2 one month later carrying a dog named Laika. The first Vanguard launch attempt in December collapsed in a huge fireball, which the press dubbed “Flopnik.” The Explorer was launched successfully in January. The solar cell powered Vanguard I was launched on 17 March 1958; it is still in orbit. [8] The Vanguard solar cells had a total power of one tenth of a watt in an array of one tenth of a square meter, the equivalent of 1 watt/m² with an efficiency of 10 percent. Solar cells only work out as far as the orbit of Jupiter, where the sun’s radiant energy fades. Beyond that, nuclear cells that produce their own radiation from radioactive decay become necessary.

Solar cell technology advanced as an integral part of the space race between the US and the USSR in the second half of the twentieth century. With launch payload limits that necessitated minimum weight and surface area, aerospace applications favored higher power density cells without regard to unit cost. The key parameter is specific power, which is watts per kilogram. By using multiple layers of solar cells with different materials to take advantage of different wavelengths of incident solar radiation, efficiencies of over 45 percent have been achieved. The subsequent worldwide rollout of solar cell technology was precipitated by the need for stand-alone powering capabilities where transmission lines would not reach or where batteries were too expensive to install and maintain. Ironically, the oil and gas behemoth Exxon-Mobil provided part of the funding to develop affordable solar cells using lower grade silicon and cheaper materials to drive the cost from $100 to $20 per watt. The motivation was to provide power for remote pumping stations and offshore rigs, primarily for signal and alarm systems. The cheaper cells made it cost effective for the US Coast Guard to implement solar cells to replace batteries on ocean buoys and for railroads to upgrade to wireless solar cell signaling systems. The closing decades of the twentieth century raised the ante for solar cells with the advent of rooftop panels for buildings and solar powered pumps for irrigating far flung fields. [9]

The twenty-first century opened with the inconvenient truth that the Industrial Revolution had an unintended consequence. The United Nations Environment Programme established the Intergovernmental Panel on Climate Change (IPCC) in 1988 to provide “an assessment of the understanding of all aspects of climate change, including how human activities can cause such changes and can be impacted by them.” The Third IPCC Assessment Report at the turn of the century was a clarion call to action, confirming that over the course of the twentieth century, temperature had risen 0.6°C, snow and ice cover had fallen by 10 percent leading to an average sea level rise of 15 centimeters, and that precipitation had increased by 5 percent. [10] The search for carbon free energy on a global scale was on and photovoltaics was in the crosshairs of innovative engineering. Cost would be the determinant figure of merit. To manufacture and install solar panels in acres of arrays to generate electricity at a cost per watt comparable to fossil fuel became the “over the rainbow” goal. After a decade of delay brought on by the post 9/11 global war on terror and the financial meltdown that followed, the US government was finally able to focus on climate.

The crux of the economics issue is that high efficiency solar cells are expensive and cheap solar cells are inefficient. To be affordable as an integral part of an energy grid of the future, solar cells must be both cheap and efficient. Silicon is the semiconductor of choice because it is abundant and therefore cheap; it is second only to oxygen as the most common element in the earth’s crust (28.2 percent). However, raw silica must be chemically treated to convert it into crystalline silicon that will conduct electricity. Silicon PV cells are made by cutting crystalline silicon into thin slices that are doped to produce the PN junction of a diode, with metallic contacts to conduct the photon generated current flow. The crystal structure determines the efficiency of the cell. Single crystal cells are the most efficient but they are more expensive to manufacture than cells with multiple crystals. The efficiency of the best commercial single crystal solar cells is about 20 percent. This can be improved by adding additional cells that are designed to capture photons at different frequencies. When these are stacked together in what are known as multijunction cells, efficiencies of nearly 50 percent can be achieved. These PV cells are at the efficient but expensive end of the spectrum. At the opposite end of the spectrum are thin film solar cells that are applied to a substrate of metal, glass, or plastic that can be flexible to allow for contoured surface installations. Thin film solar cells trade away efficiency for lower cost. The ultimate goal of any successful solar cell is to produce electricity at the lowest dollar per watt value after all factors, including installation, maintenance, replacement, and materials cost, are included in the calculation. [11]
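The dollar per watt figure of merit described above can be sketched in a few lines of Python. Every cost figure below is a hypothetical placeholder chosen only to show the shape of the calculation; only the rough efficiency levels (about 20 percent for single crystal cells, nearly 50 percent for multijunction cells) echo the text, and the thin film efficiency is likewise an assumption.

```python
from dataclasses import dataclass


@dataclass
class PanelOption:
    name: str
    efficiency: float         # fraction of sunlight converted to electricity
    panel_cost_per_m2: float  # materials and manufacturing, dollars per m^2 (hypothetical)
    other_cost_per_m2: float  # installation, maintenance, replacement, dollars per m^2 (hypothetical)


def dollars_per_watt(option: PanelOption, irradiance_w_per_m2: float = 1000.0) -> float:
    """Total cost per square meter divided by electrical output per square meter."""
    watts_per_m2 = option.efficiency * irradiance_w_per_m2
    total_cost_per_m2 = option.panel_cost_per_m2 + option.other_cost_per_m2
    return total_cost_per_m2 / watts_per_m2


if __name__ == "__main__":
    # Placeholder numbers for illustration, not market data.
    options = [
        PanelOption("multijunction", 0.45, 5000.0, 100.0),
        PanelOption("single crystal silicon", 0.20, 200.0, 100.0),
        PanelOption("thin film", 0.10, 60.0, 100.0),
    ]
    for option in options:
        print(f"{option.name:24s} ${dollars_per_watt(option):.2f} per watt")
```

With these made-up inputs the cheapest panel does not win: costs that scale with area, such as installation, are spread over fewer watts when efficiency is low, which is why the text insists that all factors be included in the calculation.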

That achieving the right balance between cost and efficiency would be difficult was evident early on. The Energy Policy Act of 2005 empowered the Department of Energy (DOE) to “spur commercial investments in clean energy policies that use innovative technologies” through the use of federal loan guarantees to private companies. Solyndra, a California company that had developed copper indium gallium diselenide thin-film solar cell technology, seemed a sure bet and was richly endowed with federal funding. The technology worked to reduce power cost but the economics didn’t; the company was unable to compete with conventional, flat silicon solar panels. When the company went bankrupt in 2011, two years after receiving its loan guarantee, it was considered the “first serious financial scandal of the Obama Administration.” When the dust settled, it was generally concluded that the government’s ability to pick technology winners was inherently flawed; federal funding should be directed at research and development with the marketplace promoting viable technologies. Bloomberg News concluded that “If the Solyndra debacle gets U.S. policy pointed in the right direction, the loan-guarantee losses won’t have been totally in vain.” [12]

The Advanced Research Projects Agency for Energy (ARPA-E) was established and funded in 2009 to advance “high-potential, high-impact energy technologies that are too early for private-sector investment” using the Defense Department DARPA model that pioneered the Internet. Of the 46 ARPA-E energy research centers funded in 2010, 24 were working on solar energy issues. These initiatives are rightly in the areas of basic research to try to develop a solar cell that is easy to manufacture from cheap materials with sufficient efficiency to be cost competitive. Basic research is long term by its nature, with failures outnumbering the rare success by an order of magnitude. Among the programs in the works are Solar Agile Delivery of Electrical Power Technology (ADEPT), to improve PV performance, and Full-Spectrum Optimized Conversion and Utilization of Sunlight (FOCUS), to expand the range of solar cells to encompass a broader bandwidth of solar radiation frequencies. It goes without saying that a mnemonic acronym is nearly a prerequisite for government funded programs. While there have been no eureka breakthroughs to date, there is every reason to hope that there will be. [13]

While a super solar cell may be in the offing at some point, there is something to be said for Adam Smith’s tried and true economies of scale. Spaceship Earth is not payload limited like Vanguard rockets. Manufacturing myriad large, cheap solar panels in an assembly line manner to cover large swaths of surface area is sure to drive the cost per unit down, just as it did for pins according to Smith’s dictum. In 2006, one of the world’s largest semiconductor manufacturers embarked on a program to manufacture garage door sized glass panels coated with thin films of amorphous silicon, a focused attempt to sacrifice efficiency for size to lower the dollar per watt cost. The assembly line process started with 60 ft² glass panels precoated with a thin metal oxide film and run on a conveyor belt through an automatic laser scribe to define the boundaries of 216 individual cell panels. Three layers of amorphous silicon that each absorb light from different parts of the spectrum were robotically added sequentially using vapor deposition. With the addition of metal contacts and a junction box, the panels were ready for shipment. With a cost of $3.50 per watt that was projected to decrease to $1.00 per watt as production ramped up, the prognosis for large scale arrays was sanguine. [14] The company shut down the assembly line in 2010 due to lack of demand. [15]

The difficulties with manufacturing solar cells in the United States to satisfy market demand at both the high efficiency, high cost and low efficiency, low cost ends of the spectrum are indicative of a global economic megatrend. The solar panel supply and demand imbalance is a microcosm of the effects of China’s manufacturing juggernaut. The Chinese produced 85 percent of all solar panels sold across the world in 2022, with almost the entire balance from other Asian-Pacific (APAC) nations, mostly Vietnam. The United States and Europe produced less than one percent each. This contrasts with the total of 1,000 gigawatts (GW) of PV panels installed globally in 2022, with 50 percent in China and APAC and about 17 percent each in Europe and North America. This sounds like a lot of power, but it is only about 15 percent of the total renewable capacity of 7,500 GW, which is only about 10 percent of the global energy supply. This means that solar energy comprises only about one to two percent of world total electrical generation. The 150 gigawatts of solar energy added in 2021 was a record amount; it is one third of the average annual addition in PV power needed over the next decade to meet the goal of carbon neutrality in 2030. [16]

Returning now to the original thesis that the sun produces ample energy to empower human enterprise many times over: even if PV cell chemistry and physics could be engineered imaginatively into cheap and efficient solar panels, two intractable problems remain. One is that sunlight is a diurnal and seasonal energy source; the other is the lack of a repository to store electricity when supply exceeds immediate demand. Most of the industrial world is geographically situated between 30 and 50 degrees north of the equator. This means that the 1,000 watts per square meter that falls on the equator at midday is reduced to about 600 watts per square meter in the industrial zone. It is only midday at noon, so the overall energy delivered must also be discounted by half, to 300 watts per square meter, to account for mornings and afternoons. Cloud cover, which in some locations like the UK amounts to more than half the day on average, reduces solar cell production by a further factor of about one and a half to three. The net effect is that the actual amount of solar energy that impinges on panels ranges from about 100 watts per square meter in Germany and New York to 200 watts per square meter in Spain and Texas. With commercial solar cell efficiency at 10 percent and unlikely to ever exceed 20 percent, the output electricity is only about 10 to 20 watts per square meter. [17] This means that gargantuan solar panel “farms” are needed to provide for a city size load in the gigawatt (GW, billions of watts) range. These are only likely to be economical in those areas that are closer to the equator and are relatively near to the cities they supply. The largest solar farm in the world is in the desert state of Rajasthan just west of Delhi, India, covering 14,000 acres and producing just over two gigawatts. The largest facility in the United States is in California, with 579 MW (0.6 GW) covering 3,000 acres. The largest solar farm in the state of Delaware produces 15 MW on 80 acres.
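The chain of discounts above, and the land area it implies, can be laid out as a minimal Python sketch. The latitude and time of day factors follow the figures in the text; the cloud factor is a hypothetical value chosen so that the result lands inside the 100 to 200 watts per square meter range quoted for sunlight reaching the panels, and the function names are illustrative. The farm capacities are the ones quoted in the text and are presumably peak ratings rather than round the clock averages, which would explain why they come out higher than the 10 to 20 watts per square meter average.

```python
ACRE_IN_M2 = 4046.86  # square meters in one acre


def sunlight_on_panel(peak_w_m2: float = 1000.0,
                      latitude_factor: float = 0.6,
                      time_of_day_factor: float = 0.5,
                      cloud_factor: float = 0.5) -> float:
    """Average sunlight reaching a panel after each discount (W per m^2).

    cloud_factor is an assumed illustrative value, not a figure from the text.
    """
    return peak_w_m2 * latitude_factor * time_of_day_factor * cloud_factor


def electrical_output(sunlight_w_m2: float, efficiency: float = 0.10) -> float:
    """Average electrical output per square meter of panel."""
    return sunlight_w_m2 * efficiency


def acres_for_load(load_watts: float, output_w_m2: float) -> float:
    """Panel area in acres needed to supply a given average load."""
    return load_watts / output_w_m2 / ACRE_IN_M2


def farm_density(capacity_watts: float, acres: float) -> float:
    """Quoted capacity divided by land area (W per m^2)."""
    return capacity_watts / (acres * ACRE_IN_M2)


if __name__ == "__main__":
    sunlight = sunlight_on_panel()
    print(f"sunlight on panel: {sunlight:.0f} W/m^2")
    for efficiency in (0.10, 0.20):
        output = electrical_output(sunlight, efficiency)
        print(f"efficiency {efficiency:.0%}: {output:.0f} W/m^2, "
              f"{acres_for_load(1e9, output):,.0f} acres per GW")
    for name, watts, acres in [("Rajasthan", 2e9, 14000),
                               ("California", 579e6, 3000),
                               ("Delaware", 15e6, 80)]:
        print(f"{name:10s} {farm_density(watts, acres):.0f} W/m^2 quoted capacity")
```

Under these assumptions a one gigawatt average load needs on the order of ten to twenty thousand acres of panel, which is the scale of the largest farms listed above.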

It is conceivable that enough solar panel mega farms could be built in some places to make enough electricity to meet demand when the sun shines. But what do you do at night and during winter? And what do you do when PV power supply exceeds grid demand? The answer to both questions is energy storage. Saving the excess current of PV cells during cloudless, sunny days in summer to be used at night and over the winter is the Achilles’ heel of renewable energy. Long-duration energy storage (LDES) is the collective name for methods, both real and imagined, that seek to alleviate the renewable storage problem. Rechargeable batteries cannot store energy on a large enough scale because they have low energy density, a short life cycle, and, ultimately, cost too much. The most well-established LDES technology is pumped-storage hydropower, the name a literal description of its modus operandi. Excess renewable electricity produced is used to pump water from a low elevation catch basin to an elevated reservoir. The stored potential energy is converted back to electricity on windless nights by water turbines. There are also proposals to use the excess solar power electricity to make hydrogen gas with electrolysis. One may conclude that, while solar energy may be one of many technologies that will need to be employed to reduce fossil fuel demand, it is hardly a panacea.
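To give a sense of scale for pumped-storage hydropower, the Python sketch below applies the standard potential energy relation E = mgh, which is textbook physics rather than something stated above. The reservoir volume, the height difference, and the turbine efficiency are hypothetical values chosen for illustration.

```python
WATER_DENSITY = 1000.0    # kilograms per cubic meter
GRAVITY = 9.81            # meters per second squared
JOULES_PER_GWH = 3.6e12   # joules in one gigawatt hour


def stored_energy_gwh(volume_m3: float, head_m: float,
                      turbine_efficiency: float = 0.9) -> float:
    """Recoverable energy of water held head_m above the lower basin, in GWh.

    turbine_efficiency is an assumed illustrative value.
    """
    mass_kg = WATER_DENSITY * volume_m3
    potential_joules = mass_kg * GRAVITY * head_m
    return potential_joules * turbine_efficiency / JOULES_PER_GWH


def hours_of_supply(energy_gwh: float, load_gw: float) -> float:
    """How long the stored energy could carry a constant load."""
    return energy_gwh / load_gw


if __name__ == "__main__":
    # Hypothetical reservoir: 10 million cubic meters, 300 meters above the basin.
    energy = stored_energy_gwh(1e7, 300.0)
    print(f"stored energy: {energy:.2f} GWh")
    print(f"hours at 1 GW: {hours_of_supply(energy, 1.0):.1f}")
```

Even a reservoir of this size would carry a one gigawatt city for only a matter of hours, which suggests why overnight storage is plausible while storage across seasons remains the harder problem.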

References:

1. Laughlin, R. Powering the Future, Basic Books, New York, 2011, pp 91-93.

2. Petrucci, R. General Chemistry, Principles and Modern Applications, Macmillan Publishing Company, New York, 1985, pp 198-203, 364-401.

3. Semiconductors and Insulators, Theory of, Encyclopedia Britannica, Macropedia 15th Edition William Benton, Chicago, Illinois, 1974, Volume 16, pp 522-529.

4. Marton, L. “Photoelectric Effect” Encyclopedia Britannica, Macropedia 15th Edition William Benton, Chicago, Illinois, 1974, Volume 14, pp 296-300.

5. Neamen, D. Semiconductor Physics and Devices, McGraw Hill, Boston, MA, 2003, pp 104-106. http://www.fulviofrisone.com/attachments/article/403/Semiconductor%20Physics%20And%20Devices%20-%20Donald%20Neamen.pdf

6. Riordan, M. Crystal Fire: The Invention of the Transistor and the Birth of the Information Age, W.W. Norton Company, New York, 1988, pp 97-113.

7. Smil, V. Energy in Nature and Society, MIT Press, Cambridge, MA, 2008, pp 255-257

8. https://www.nasa.gov/feature/65-years-ago-the-international-geophysical-year-begins

9. Perlin, J. “Late 1950s – Saved by the Space Race”. SOLAR EVOLUTION – The History of Solar Energy. The Rahus Institute. http://californiasolarcenter.org/old-pages-with-inbound-links/history-pv/

10. Climate Change 2001 Synthesis Report, Third Assessment Report of the Intergovernmental Panel on Climate Change, Cambridge University Press, Cambridge, UK. 2001.

11. https://www.energy.gov/eere/solar/solar-photovoltaic-technology-basics

12. Lott, M. “Solyndra — Illuminating Energy Funding Flaws?” Scientific American. September 27, 2011.

13. https://arpa-e.energy.gov/technologies/programs

14. Bourzac, K. “Scaling up Solar Power” MIT Technology Review, March/April 2010, pp 84-86.

15. Kanellos, M. “Applied Materials Kills its SunFab Solar Business”. Greentech Media 21 July 2010.

17. MacKay, D. Sustainable Energy – without the hot air UIT, Cambridge, UK, 2009 pp 38-49.